I’ve been reflecting on a thoughtful piece by Martin Chesbrough regarding our relationship with AI—specifically, whether we should view it as a Tool, Team Mate, or Thinking Partner. Martin caught my attention immediately with a claim that AI argued with him—and won.

My internal response was a bit different. I suspect he didn’t actually argue with a machine; rather, he argued with his former self, aided and abetted by AI—and he was right.

The Anatomy of an “Argument”

I’ve had genuine arguments with AI before, but usually those are about the system’s opinions on what it is doing, not the facts we share. As Daniel Patrick Moynihan famously said, “Everyone is entitled to his own opinion, but not to his own facts”. Arguing about facts is housekeeping; arguing about opinions is dialectic.

In Martin’s case, the “argument” was about the data: the AI essentially told him that while his new concept was interesting, the evidence for it simply wasn’t in the data. We have to be careful in drawing conclusions here, because there are “many people in this marriage”. When an AI responds, its answer is a cocktail of:

- The Prompt: This is your expression of focus, setting the boundaries for the AI’s interest.
- The Content: In my work with STPrism, I use NotebookLM specifically to make the source data a fixed parameter rather than a variable.
- Prior History: The “train of thought” in a chat progressively filters and prioritizes certain interpretations. In human terms, we call this GroupThink.
- Chance: There is always an element of probabilistic randomness at play.

I have to wonder: when did Martin’s “new idea” actually become new? Was it truly novel, or was it something he had forgotten that the LLM simply retrieved through “housekeeping” (archetypal grunt work)?

Our Three Reactions to AI Responses

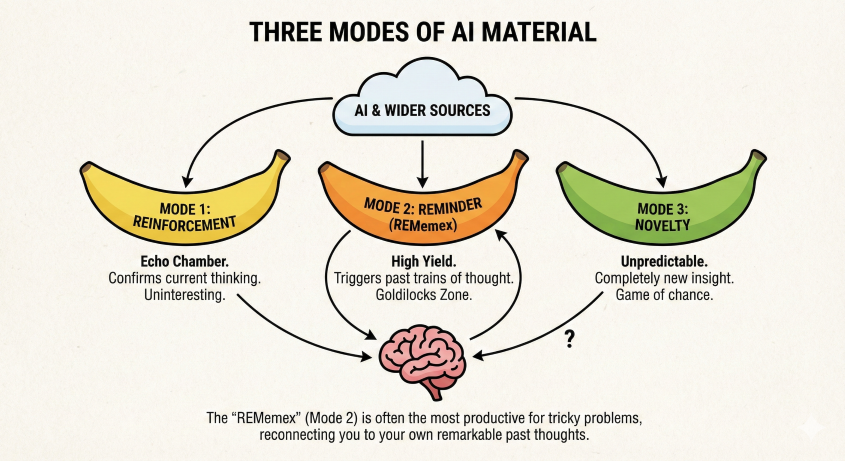

I see AI responses falling into three distinct modes relative to the user:

- Mode 1 (Reinforcement): The AI simply echoes what we are already thinking. This is uninteresting because it just reinforces our own echo chamber.
- Mode 2 (The Reminder): This triggers something we previously thought or read. These ideas resonate because of a prior, perhaps hazy, familiarity.
- Mode 3 (Novelty): This is a completely novel insight. It’s a game of chance where the machine pulls random threads from wider sources.

The balance between Mode 2 and Mode 3 depends entirely on your experience. A beginner sees novelty (Mode 3) everywhere, while an expert sees reminders (Mode 2). I find Mode 2 to be the “high-yield” zone because it breaks through your “headful limit” (the limited capacity of your own active thinking, which on its own keeps you in Mode 1) using ideas you’ve already pre-validated. Anything novel, by contrast, requires the work of grasping the gist and aligning it with what you already know.

Memories of the Future

The value of Mode 2 comes from how our minds actually work. In Scenario Planning, we use scenarios to sensitize the brain to specific themes. David Ingvar called this the “Memory of the Future”. It’s that everyday experience where you look up an “obscure” word and suddenly start seeing it everywhere; the world hasn’t changed, but your filtering has.

To find innovation, I think we have to act like astronomers during a solar eclipse. They use the eclipse to block the blinding glare of the sun so they can actually see its outer structure, the corona. We must likewise dampen the “obvious” consensus to pick up the weaker, proximal signals. These ideas occupy the “Goldilocks position”: close enough to the mainstream to be valid, but different enough to bring a new perspective.

The Pollution Problem

A major finding in my STPrism research is the effect of pollution. If you stay inside one train of thought for too long with your prompts, the AI narrows its responses and suppresses variety, progressively easing out divergent opinions. A “naïve” or fresh user session often outperforms a “trained” one because it isn’t carrying that baggage. Gemini helpfully provided guidelines.

Grunt Work and the “ReMemex”

I still value AI for the grunt work. For STPrism, I’ve used Google’s AI to develop the regular expressions (regex) needed to collect and edit data sets. If you’re technical enough to know you need a sed/awk script but amateur enough to need the manual, getting it right the first time is a massive productivity leap.

I like to think of this use of Mode 2 responses from AI as a ReMemex. Vannevar Bush first proposed the “Memex” in 1945 as a device to connect documents and bypass the friction of index cards. In Mode 2, AI acts as this “reminder machine”, reaching back to trigger perspectives we’ve embraced in the past but which have slipped below our conscious threshold.

Ultimately, when Martin gets excited by an AI response, it might not be that the system did something remarkable; it may simply have triggered a remarkable train of thought that was already sitting in Martin’s own brain.

Disclaimer

Of course, a simpler explanation may come from Martin himself (what he actually did and what actually happened). This piece of speculation, combining explanation and hypothesis, is a necessary step toward explaining how AI might work, what we might expect from it, and what we can validate. Without this, AI is just an expensive fantasy.